Written by Matt Walsh | Reviewed and edited by Chris Singleton

In this ChatGPT statistics overview, you’ll find all the key figures about the chatbot that is currently taking the world by storm. From userbase size and running costs to investors and projected revenue, you’ll find a host of interesting ChatGPT statistics and facts below…

Please note: you are free to use any of the ChatGPT stats featured in this post in your own articles. However, we’d politely request that you credit Style Factory as a source and provide a link to this post when doing so. Thank you!

Article highlights

- What is ChatGPT?

- Who owns ChatGPT?

- How many people use ChatGPT?

- How much traffic does the ChatGPT website get?

- How much does it cost to run ChatGPT?

- How much does it cost to use ChatGPT?

- How long did it take to train ChatGPT?

- What was the size of the dataset used for training ChatGPT?

- What languages does ChatGPT understand?

- What is ChatGPT’s IQ?

- How accurate is ChatGPT?

- Does ChatGPT pass the Turing test?

- Where is ChatGPT available?

- Who are ChatGPT’s investors?

- What is OpenAI’s net worth?

- How big is the artificial intelligence market?

- What are the main competitors to ChatGPT?

- Will I lose my job because of ChatGPT?

What is ChatGPT?

- ChatGPT is a chatbot (or, in technical terms, a ‘large language model’) created by US-based artificial intelligence company OpenAI. When you ask ChatGPT a question, it draws on a huge body of textual data — large chunks of content found on the Internet, many books, all of Wikipedia — to provide you with a human-like answer to it. You can also ask ChatGPT to create content for you — it can write poems, create computer code, translate languages, provide article structures and do much else besides. (Source: OpenAI.)

Who owns ChatGPT?

- ChatGPT is owned by OpenAI, an artificial intelligence research laboratory based in San Francisco. The company consists of two parts: OpenAI Incorporated (non-profit) and OpenAI Limited Partnership (for-profit). This combination of a ‘non-profit’ and ‘for-profit’ company structure is reportedly in place to allow OpenAI to be able to legally attract investment from major investors, while giving company employees equity in the company. (Source: Wikipedia and Wired.)

How many people use ChatGPT?

- ChatGPT is the fastest-growing consumer software application ever. It gained 100 million users within a few months of its launch in November 2022. As of April 2025, ChatGPT is reported to have more than 400 million weekly users. (Source: Reuters.)

- To put ChatGPT’s phenomenal user acquisition rate into perspective, it took TikTok nine months to achieve its first 100 million users. Other mega-successful apps took much longer to do so: Instagram needed 2.5 years, Facebook took almost 5 years and Canva took 9 years to reach 100 million users. ChatGPT achieved this feat in a mere 60 days or so. (Sources: Reuters and Canva.)

- ChatGPT obtained 1 million users within 5 days of its initial launch in November 2022. The app that came closest to acquiring one million users this quickly is Instagram, which reached that figure around two and a half months (roughly 70 days) after launch, taking about 14 times as long as ChatGPT. (Source: Statista.)

- In April 2025, ChatGPT’s website averaged over 134 million visitors every day. (Source: Similar Web.)
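
The growth comparisons above come down to simple ratios. The Python sketch below reproduces them from the figures quoted in this section. The month counts are rounded for illustration (Facebook’s ‘almost 5 years’ is treated as 60 months, for example), and the names `MONTHS_TO_100M` and `times_slower_than` are made up for this example rather than taken from any of the cited sources.

```python
# Approximate months needed to reach 100 million users, based on the
# figures quoted above. The rounding is an assumption for illustration.
MONTHS_TO_100M = {
    "ChatGPT": 2,      # "a mere 60 days or so"
    "TikTok": 9,
    "Instagram": 30,   # 2.5 years
    "Facebook": 60,    # "almost 5 years", rounded up
    "Canva": 108,      # 9 years
}


def times_slower_than(baseline: str, months: dict[str, float]) -> dict[str, float]:
    """Return how many times longer each app took than the baseline app."""
    base = months[baseline]
    return {app: value / base for app, value in months.items() if app != baseline}


ratios = times_slower_than("ChatGPT", MONTHS_TO_100M)
for app, ratio in sorted(ratios.items(), key=lambda item: item[1]):
    print(f"{app} took about {ratio:.1f}x as long as ChatGPT to reach 100M users")

# Same idea for the first 1 million users: roughly 70 days versus 5 days.
instagram_vs_chatgpt_1m = 70 / 5
print(f"Instagram took about {instagram_vs_chatgpt_1m:.0f}x as long to reach 1M users")
```

Swapping in more precise launch dates would change the exact multiples, but not the overall picture.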

How much traffic does the ChatGPT website get?

- We know that ChatGPT has over 400 million users, but traffic to its website is significantly higher than that. In April 2025, it is estimated that there were more than 4.6 billion visits to ChatGPT in total. (Source: Semrush.)

- ChatGPT’s 400+ million users are spread across the globe, but the country with the largest proportion of users is the United States, which is estimated to account for over 15% of them. India is thought to have the second largest ChatGPT userbase, accounting for an estimated 13.5%+ of all users. (Source: Similar Web.)

- It is estimated that 56% of social media traffic to ChatGPT starts on Reddit, compared to 18% on Facebook, 16% on YouTube, and 9% on Facebook. (Source: Similar Web.)

How much does it cost to run ChatGPT?

- Software industry experts have estimated that running costs for ChatGPT range somewhere between $100,000 and $700,000 per day, or $3 million to $21 million per month. ChatGPT sits on top of Microsoft’s Azure cloud infrastructure, which charges around $3 per hour for a single A100 GPU. (Source: Tom Goldstein and Business Insider.)

- Given that the average ChatGPT answer is around 30 words, the company is estimated by some commentators to pay approximately one cent for every user query, which works out at about 0.03 cents per word generated by ChatGPT. (Source: Tom Goldstein.)

- OpenAI CEO Sam Altman is on the record as saying the running costs for ChatGPT are extremely high. In response to a Twitter user, he said: “we will have to monetize it somehow at some point; the compute costs are eye-watering”. (Source: Sam Altman.)

- Of course, server costs and a virtual supercomputer framework form only some of the costs associated with running ChatGPT. Another cost is paying the people who built it. At time of writing, OpenAI employs roughly 770 people. Employment website Glassdoor estimates that employee salaries at OpenAI range from $54K per annum for the position of ‘IT support’ up to $370K for ‘Research Scientist’. However, other AI industry-watchers provide much larger salary estimates for OpenAI. For example, employment website Levels.fyi estimates that a level 6 software technician at OpenAI earns approximately $1.34 million per year, made up of a base salary of around $379K and stock options valued at $960K. (Sources: Wikipedia, Glassdoor and Levels.fyi.)
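
For readers who want to check the running-cost arithmetic, here is a rough Python sketch. It uses only the estimates quoted above and assumes a flat 30-day month. The helper names are illustrative, and the GPU count is a back-of-envelope figure that assumes every dollar goes on GPU time, which is unlikely to be true in practice.

```python
DAYS_PER_MONTH = 30          # assumption: a flat 30-day month
GPU_HOURLY_RATE_USD = 3.0    # approximate A100 hourly rate quoted above
WORDS_PER_ANSWER = 30        # average answer length quoted above
COST_PER_QUERY_USD = 0.01    # "approximately one cent" per query


def monthly_cost(daily_cost_usd: float) -> float:
    """Scale an estimated daily running cost up to a 30-day month."""
    return daily_cost_usd * DAYS_PER_MONTH


def gpus_running_all_day(daily_cost_usd: float) -> float:
    """GPUs that could run 24 hours a day if the whole budget paid for GPU time."""
    return daily_cost_usd / (GPU_HOURLY_RATE_USD * 24)


def cost_per_word_cents() -> float:
    """Cost of one generated word, in cents, from the per-query estimate."""
    return COST_PER_QUERY_USD / WORDS_PER_ANSWER * 100


for daily in (100_000, 700_000):
    print(
        f"${daily:,} per day is ${monthly_cost(daily):,.0f} per month, "
        f"or roughly {gpus_running_all_day(daily):,.0f} GPUs running non-stop"
    )
print(f"Cost per word: about {cost_per_word_cents():.2f} cents")
```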

How much does it cost to use ChatGPT?

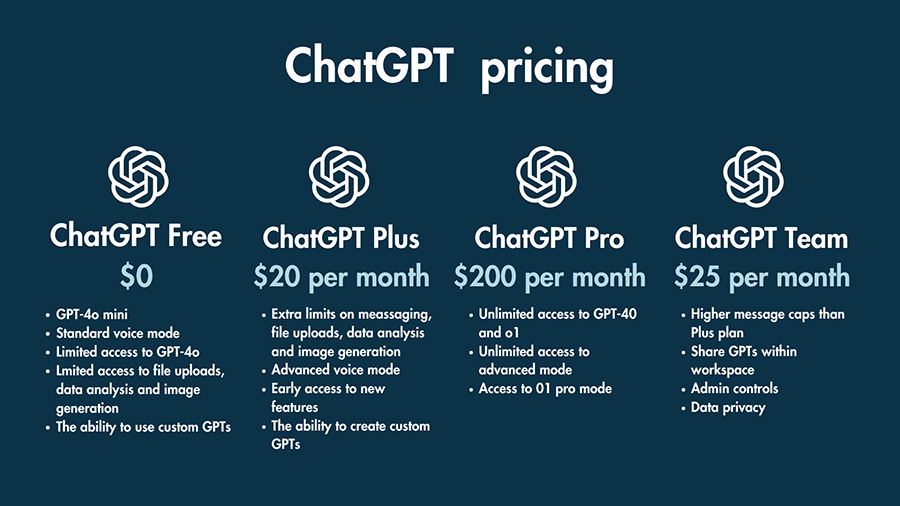

- There are 5 main pricing options for ChatGPT: a free version, ‘ChatGPT Plus’ ($20 per month), ‘ChatGPT Pro’ ($200 per month), ‘ChatGPT Team’ ($25 per user per month) and ‘ChatGPT Enterprise’ (custom pricing). (Source: OpenAI.)

- The free version provides access to ‘GPT-4o mini,’ a lightweight variant of GPT-4 designed for basic tasks with limited capabilities compared to the full version. (Source: OpenAI.)

- ‘ChatGPT Plus,’ which involves a $20 per month fee, gives users access to the full version of ChatGPT-4o. The ‘Plus’ plan also gives users access to custom GPTs, voice mode and a research preview of GPT-4.5, and includes extended allowances with regard to messaging, file uploads and image generation. (Source: OpenAI.)

- ‘ChatGPT Pro’ ($200 per month) provides unlimited access to both GPT-4o and GPT-o1. It also offers a ‘pro mode’, which uses extra computing power to provide the best answers to the hardest questions you input into the tool. (Source: OpenAI.)

- The ChatGPT ‘Team’ plan provides workspace management features that allow multiple users to collaborate via ChatGPT. This plan costs $25 per user per month when paying annually (or $30 per user per month on a month-by-month basis). Any data that team members input into ChatGPT via their workspace is excluded from ChatGPT’s training dataset. (Source: OpenAI.)
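
To see what these plans add up to over a year, here is a small Python sketch based on the prices listed above. The 10-person team is a hypothetical example, the month-by-month Team price is read here as a per-user price, and the function name is illustrative.

```python
MONTHS_PER_YEAR = 12
PLUS_MONTHLY_USD = 20
PRO_MONTHLY_USD = 200
TEAM_ANNUAL_BILLING_USD = 25    # per user per month, when paying annually
TEAM_MONTHLY_BILLING_USD = 30   # assumption: per user per month, paid monthly


def yearly_team_cost(users: int, per_user_monthly_usd: float) -> float:
    """Total yearly cost of a Team workspace with the given number of users."""
    return users * per_user_monthly_usd * MONTHS_PER_YEAR


team_size = 10  # hypothetical team, for illustration only
annual_billing = yearly_team_cost(team_size, TEAM_ANNUAL_BILLING_USD)
monthly_billing = yearly_team_cost(team_size, TEAM_MONTHLY_BILLING_USD)

print(f"Plus, one user, one year: ${PLUS_MONTHLY_USD * MONTHS_PER_YEAR:,}")
print(f"Pro, one user, one year: ${PRO_MONTHLY_USD * MONTHS_PER_YEAR:,}")
print(f"Team of {team_size}, billed annually: ${annual_billing:,.0f}")
print(f"Team of {team_size}, billed monthly: ${monthly_billing:,.0f}")
print(f"Saving from annual billing: ${monthly_billing - annual_billing:,.0f}")
```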

How long did it take to train ChatGPT?

- Some ChatGPT commentators have estimated that if ChatGPT were trained on a single NVIDIA Tesla V100 ‘Graphics Processing Unit’ (GPU), it would take around 355 years to complete training on its dataset. However, OpenAI reportedly used 1,023 A100 GPUs to train ChatGPT, so it is possible that the training process was completed in as little as 34 days. (Source: Lambda Labs.)

- The cost of training ChatGPT is estimated to be just under $5 million. (Source: Lambda Labs.)
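
The jump from 355 years to 34 days can be sanity-checked with a few lines of Python. This is only a sketch: it assumes the work splits perfectly evenly across GPUs (real training runs never scale this cleanly), and the function names are illustrative. Under that assumption, dividing the single-GPU estimate by 1,023 gives about 127 days, so the 34-day figure implies that each A100 was doing several times the work of a V100.

```python
DAYS_PER_YEAR = 365
SINGLE_V100_YEARS = 355   # single-GPU estimate quoted above
GPU_COUNT = 1023          # reported number of A100 GPUs
REPORTED_DAYS = 34        # shortest training time suggested above


def ideal_parallel_days(single_gpu_years: float, gpu_count: int) -> float:
    """Training time in days if the work split perfectly across identical GPUs."""
    return single_gpu_years * DAYS_PER_YEAR / gpu_count


def implied_per_gpu_speedup(single_gpu_years: float, gpu_count: int, reported_days: float) -> float:
    """How much faster each GPU must be than the baseline GPU to hit the reported time."""
    return ideal_parallel_days(single_gpu_years, gpu_count) / reported_days


days = ideal_parallel_days(SINGLE_V100_YEARS, GPU_COUNT)
speedup = implied_per_gpu_speedup(SINGLE_V100_YEARS, GPU_COUNT, REPORTED_DAYS)
print(f"Perfect scaling on V100-class GPUs: about {days:.0f} days")
print(f"Implied per-GPU speedup to finish in {REPORTED_DAYS} days: about {speedup:.1f}x")
```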

What was the size of the dataset used for training ChatGPT?

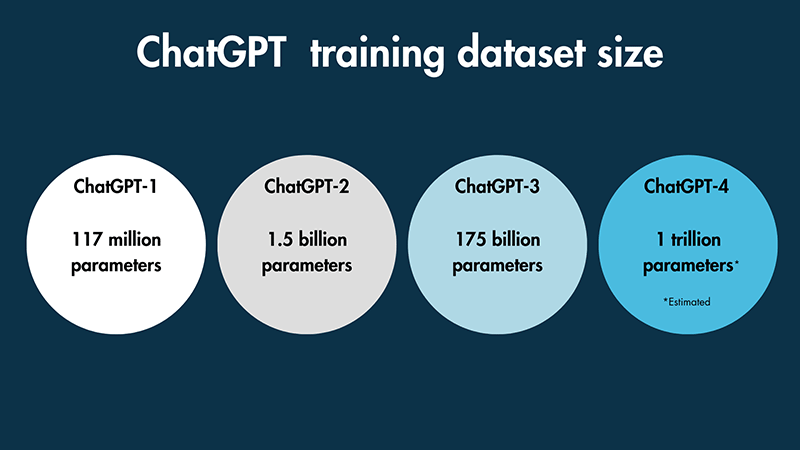

- ChatGPT-4, the latest version of ChatGPT, is estimated to have around 1 trillion parameters (the adjustable values a model learns during training), more than 5 times as many as ChatGPT-3. (Source: Wired and OpenAI.)

- ChatGPT-4 training data included feedback from users of ChatGPT-3 as well as feedback from over 50 AI safety and security experts. (Source: OpenAI.)

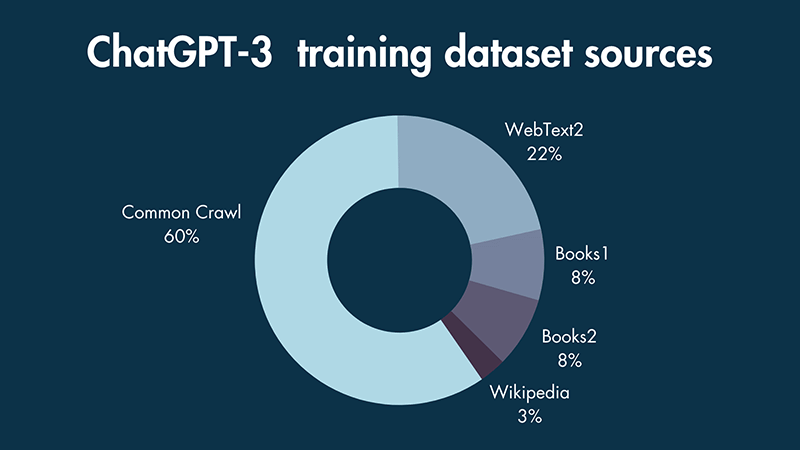

- ChatGPT-3’s dataset comprised textual data from 5 sources, each with a different proportional weighting. (Source: OpenAI.)

- 60% of ChatGPT-3’s dataset was based on a filtered version of what is known as ‘common crawl’ data, which consists of web page data, metadata extracts and text extracts from over 8 years of web crawling. (Source: OpenAI.)

- 22% of ChatGPT-3’s dataset came from ‘WebText2’, which consists of Reddit posts that have three or more upvotes. (Source: OpenAI.)

- 16% of ChatGPT-3’s dataset came from two Internet-based book collections. These books included fiction, non-fiction and also a wide range of academic articles. (Source: OpenAI.)

- 3% of ChatGPT-3’s dataset comes from the English-language version of Wikipedia. (Source: OpenAI.)

- 93% of ChatGPT-3’s dataset was in English. (Source: OpenAI.)
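
The percentages above are rounded, so they add up to 101% rather than exactly 100%. The Python sketch below shows one way to turn them into a clean training mix by normalising the weights. The two book collections are combined into a single ‘Books’ entry, as in the list above, and the dictionary and function names are illustrative.

```python
# Rounded dataset weights (in percent) as quoted above. The two book
# collections are combined into one entry for simplicity.
GPT3_DATASET_WEIGHTS = {
    "Common Crawl (filtered)": 60,
    "WebText2": 22,
    "Books": 16,
    "Wikipedia": 3,
}


def normalise(weights: dict[str, float]) -> dict[str, float]:
    """Rescale the weights so that they sum to exactly 1."""
    total = sum(weights.values())
    return {source: weight / total for source, weight in weights.items()}


print(f"Quoted weights add up to {sum(GPT3_DATASET_WEIGHTS.values())}%")
for source, share in normalise(GPT3_DATASET_WEIGHTS).items():
    print(f"{source}: {share:.1%} of the training mix")
```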

How much of ChatGPT’s training was done by humans?

- OpenAI’s 2020 paper on ChatGPT-3, ‘Language Models are Few-Shot Learners’, was authored by 31 different people from the OpenAI team. (Source: OpenAI.)

- Additionally, 780 people were recruited to participate in experiments to assess whether humans could tell if ChatGPT-3’s answers were generated by a human or by a computer model. (Source: OpenAI.)

- All in all, it is likely that more than 800 people were involved in the creation of ChatGPT-3.

Is there a ChatGPT app?

- There is an app version of ChatGPT available for both iOS and Android devices.

- OpenAI launched an app version of ChatGPT for iOS users on 18th May 2023. This free app was initially only available to users in the United States, but its availability has since been expanded to another 40+ countries, including Albania, Croatia, France, Germany, Ireland, Jamaica, New Zealand, Nicaragua, Nigeria, South Korea and the U.K. (Source: TechCrunch.)

- The iOS ChatGPT app was downloaded more than 500,000 times during its first week on the Apple App store. (Source: Data.ai.)

- The iOS ChatGPT app currently has a user rating of 4.9 stars out of 5, receiving a total of 2.2+ million ratings so far. (Source: Apple.)

- At time of writing, the iOS ChatGPT app is ranked 1st in the ‘Productivity’ category in the Apple App Store, one place ahead of Gmail. (Source: Apple.)

- An Android version of the ChatGPT app was launched in July 2023. At time of writing, it has been downloaded over 100 million times, and is currently rated 4.8 stars out of 5 from more than 15 million user reviews in the Google Play Store.

What languages does ChatGPT understand?

- ChatGPT is designed to primarily work in English, but it also understands certain inputs in around 95 other natural human languages, including Spanish, French, Chinese, German and Irish. (Source: SEO.ai.)

- ChatGPT was primarily written in the Python computer programming language. However, because it was exposed to various other coding languages as part of its training dataset, ChatGPT is also capable of understanding and producing responses in a wide range of computer languages. These include:

- Python

- JavaScript

- C++

- C#

- Java

- Ruby

- PHP

- Go

- Swift

- TypeScript

- SQL

- Shell

(Source: SEO.ai.)

What is ChatGPT’s IQ?

- Opinions on the IQ of ChatGPT vary from a slightly below-average IQ score of 83 to a 99th-percentile-busting IQ of 147. (Source: Fast Company and David Rozado.)

- Some ChatGPT users have asked the tool to provide different IQ-level answers to questions (with interesting results). When asked for a 200 IQ answer to the question ‘Why does it rain?’, ChatGPT provided a perfectly-structured, well-articulated and information-packed response. But when it was asked to pretend to be a person with an IQ of 50 and to answer the same question, it responded: “it rain because the sky cry, it get sad and the tear fall down on the ground. That how it make everything all wet.” (Source: @halkatov on Twitter.)

How long does it take ChatGPT to answer a question?

The short answer is: it depends on the question / request.

- In response to my question ‘What is artificial intelligence?’, ChatGPT started to answer within 0.5 seconds, and within 13 seconds it had provided me with a complete 67 word answer with a high degree of accuracy.

- I then gave ChatGPT a slightly longer request: ‘explain to me how the human brain works.’ ChatGPT took longer to get going with this answer (3 seconds), and it took 31 seconds in total to provide a 178 word answer. This answer was also very accurate.

- Lastly, I gave ChatGPT the following request: “write me a short novel in the style of William Faulkner’s ‘The Sound and the Fury’”. In this case, it took ChatGPT 7 seconds before commencing its answer. It ultimately took 70 seconds to produce a 354 word answer to this prompt. Unfortunately, its response did not (in my view) accurately respond to my request. Instead of a novel, ChatGPT produced a basic summary of the actual novel ‘The Sound and the Fury’, which was written in plain and correct English, a style very far removed from the literary approach of the novel in question.
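
The three timings above can be turned into a rough words-per-second figure. The Python sketch below does that using only the numbers from my informal tests. It is a back-of-envelope calculation rather than a benchmark, and the `Trial` class and its field names are purely illustrative.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Trial:
    """One informal timing test, using the figures reported above."""
    prompt: str
    seconds_to_first_response: float
    total_seconds: float
    words: int

    @property
    def words_per_second(self) -> float:
        """Average output speed over the whole response, including the initial wait."""
        return self.words / self.total_seconds


TRIALS = [
    Trial("What is artificial intelligence?", 0.5, 13, 67),
    Trial("Explain how the human brain works", 3, 31, 178),
    Trial("Short novel in the style of Faulkner", 7, 70, 354),
]

for trial in TRIALS:
    print(
        f"{trial.prompt}: {trial.words} words in {trial.total_seconds}s "
        f"(about {trial.words_per_second:.2f} words per second)"
    )
```

On these numbers, overall output speed stays at around five words per second in all three tests, so the longer waits mostly reflect longer answers.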

How accurate is ChatGPT?

The Internet is filled with reports of ChatGPT failing to provide accurate data in response to user prompts. For example, it was reported that ChatGPT-3 was incapable of providing a correct response to a question about how many times Argentina had won the World Cup. It answered ‘twice’ when the correct answer should have been ‘three times.’ This can be explained by the fact that ChatGPT’s training data only spans until 2021, at which time Argentina had won the World Cup twice. Argentina went on to win the competition for a third time in 2022.

OpenAI acknowledges many of ChatGPT’s limitations in its 2022 research paper.

The very first limitation mentioned by OpenAI on the ChatGPT web page is with regard to factual inaccuracy. The company states:

“ChatGPT sometimes writes plausible-sounding but incorrect or nonsensical answers. Fixing this issue is challenging, as: (1) during RL training, there’s currently no source of truth; (2) training the model to be more cautious causes it to decline questions that it can answer correctly; and (3) supervised training misleads the model because the ideal answer depends on what the model knows, rather than what the human demonstrator knows.”

— OpenAI

Other limitations of ChatGPT include:

- excessive repetition in answers

- the language model’s difficulty with ‘common sense physics’

- problems with recognizing whether or not one sentence implies another sentence

- a much higher variance compared to humans on standard benchmarks

- the provision of biased answers (which can be put down to its human-generated input data / human AI trainers).

OpenAI claims that ChatGPT-4, the latest version of ChatGPT, is around 40% more likely to produce factual responses to prompts than its predecessor ChatGPT-3. The company also claims that ChatGPT-4 is 82% less likely to respond to requests for disallowed content than ChatGPT-3. (Source: OpenAI.)

How many applications does ChatGPT have?

Too many to be able to mention them all!

Here are just a few of the ‘natural language processing’ things you can use the ChatGPT model for, though:

- writing college essays

- creating legal documents

- writing computer code

- doing mathematics

- writing emails

- creating chatbots

- translating languages

- correcting texts

- creating marketing content

- recommending content to users based on their past preferences

- creating a text-to-speech system.

Does ChatGPT pass the Turing test?

- The Turing test — named after British mathematician and computer scientist Alan Turing — is a practical test to determine if a computer can pass as a human in a conversation with a real person. When you ask ChatGPT if it is capable of passing the Turing test, the answer from the chatbot is a definitive ‘No!’ — it responds:

“The Turing test, proposed by the British mathematician and computer scientist Alan Turing, is a test of a machine’s ability to exhibit intelligent behavior that is indistinguishable from that of a human. In the traditional Turing test setup, a human evaluator engages in a conversation with both a machine and a human without knowing which is which. If the evaluator cannot consistently differentiate between the human and the machine based on their responses, the machine is said to have passed the Turing test.

As an AI language model, my purpose is to generate human-like responses to text inputs. While I can provide coherent and contextually relevant answers, there may still be instances where my responses reveal a lack of understanding or fail to accurately mimic human thought processes. Passing the Turing test is a challenging benchmark for AI, as it requires not only linguistic fluency but also the ability to demonstrate deep comprehension, nuanced reasoning, and emotional intelligence—areas where AI systems are still evolving. Nevertheless, I continually strive to improve and provide meaningful interactions that approximate human-like conversation.”

(Source: ChatGPT.)

- However, some humans disagree with ChatGPT on this. According to BuzzFeed data scientist Max Woolf, ChatGPT has indeed passed the Turing test by presenting itself to a panel of judges as indistinguishable from a real person in terms of its language use and responses. (Source: Max Woolf.)

Where is ChatGPT available?

- ChatGPT is available in around 188 countries. (Source: OpenAI.)

- However, users in China, Iran, North Korea, Russia, Venezuela and Belarus are reportedly unable to access the program. (Source: Video Gamer.)

When was OpenAI founded?

- OpenAI was founded in San Francisco in 2015 by Sam Altman, Trevor Blackwell, Greg Brockman, Vicki Cheung, Reid Hoffman, Andrej Karpathy, Durk Kingma, Jessica Livingston, Elon Musk, John Schulman, Ilya Sutskever, Peter Thiel, Pamela Vagata and Wojciech Zaremba. The fourteen founders are reported to have collectively pledged $1 billion at the time of the company’s launch. (Source: Wikipedia and Vanity Fair.)

Who are ChatGPT’s investors?

- OpenAI has received investment from many different companies over the last seven years. Microsoft is by far its biggest backer, investing $1 billion in the company in 2019, $2 billion in 2021 and a further $10 billion in January 2023. (Source: CNBC.)

- In October 2024, OpenAI raised $6.6 billion in a funding round led by Thrive Capital, with participation from Nvidia, SoftBank, and others, valuing the company at approximately $157 billion. This brings OpenAI’s total funding to around $19.6 billion, with Microsoft’s contributions totaling $13 billion. These investments have enabled OpenAI to advance its AI research and development, maintaining its position as a leader in the field. (Sources: Microsoft, NBC, Wikipedia.)

- It is reported that Microsoft’s investments in OpenAI give it a 49% stake in the company. Microsoft is expected to receive three-quarters of OpenAI’s profits until it recovers its investment. (Source: CNBC.)

- Microsoft shares have increased by more than 50% since its investment in OpenAI. (Source: Reuters.)

- Other investors in OpenAI such as Khosla Ventures are also reported to own a 49% stake in the company, with the remaining 2% being retained by OpenAI in equity. (Source: CNBC.)

- Even before Microsoft’s latest investment in ChatGPT’s parent company, OpenAI was the best-funded machine learning AI platform in the world. Its nearest competitor in terms of funding raised is Scale AI, which has raised $602 million in funding to date. (Source: Statista.)

- In April 2023, venture capital firms including Sequoia Capital, Andreessen Horowitz, Thrive and K2 Global picked up an estimated $300 million in OpenAI shares. (Source: TechCrunch.)

- As of April 2025, OpenAI is considering a transition to a public benefit corporation (PBC) structure. This proposed change aims to allow greater flexibility in attracting investment while still prioritizing public benefit. However, the move has faced criticism from former employees, AI experts, and legal scholars, who argue that it could compromise OpenAI’s foundational mission to develop artificial general intelligence (AGI) that benefits all of humanity. (Source: Time.)
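
The Microsoft figures quoted in this section can be cross-checked with a short Python sketch. It simply adds up the three reported investments and compares the result with the total funding estimate of around $19.6 billion. The variable and function names are illustrative, and the resulting share is only as reliable as those two reported figures.

```python
# Reported Microsoft investments in OpenAI, in billions of US dollars.
MICROSOFT_INVESTMENTS_BN = {2019: 1.0, 2021: 2.0, 2023: 10.0}
TOTAL_FUNDING_BN = 19.6  # approximate total funding quoted above


def microsoft_total_bn() -> float:
    """Sum of the reported Microsoft investments."""
    return sum(MICROSOFT_INVESTMENTS_BN.values())


def microsoft_share_of_funding() -> float:
    """Microsoft's investments as a fraction of OpenAI's total reported funding."""
    return microsoft_total_bn() / TOTAL_FUNDING_BN


print(f"Microsoft total: ${microsoft_total_bn():.0f} billion")
print(f"Share of total funding: {microsoft_share_of_funding():.1%}")
```

Note that this is a share of money raised, which is a different thing from the ownership stakes mentioned above.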

What is OpenAI’s net worth?

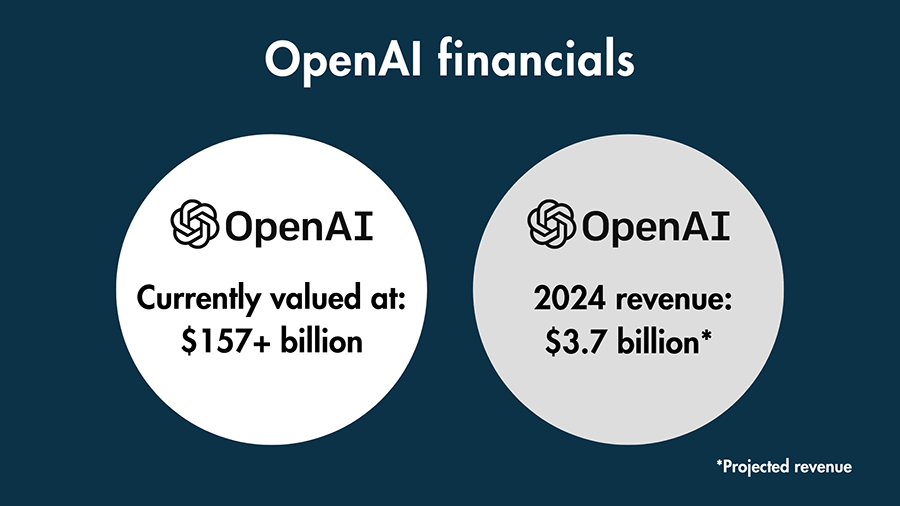

- OpenAI is currently thought to be valued at more than $157 billion. (Source: Yahoo Finance.)

- In 2023, OpenAI brought in more than $1.6 billion in revenue, with sales growing at almost 50 times the rate of its sales in 2022. (Source: Seeking Alpha.)

- Some commentators estimate that OpenAI may have lost around $540 million in 2022, more than double its losses in 2021. (Source: The Information.)

- OpenAI CEO Sam Altman stated in June 2023 that there are no plans to make OpenAI a publicly-traded company. (Source: Reuters.)

- In 2024, OpenAI’s revenue experienced significant growth. In June, CEO Sam Altman reported an annualized revenue of $3.4 billion, a substantial increase from $1.6 billion in late 2023. By the end of 2024, OpenAI projected total revenue of approximately $3.7 billion. Despite this impressive revenue growth, the company anticipated a loss of about $5 billion for the year, primarily due to high operational costs, including expenses related to computing power sourced through its partnership with Microsoft. (Source: Yahoo Finance.)

How many different sectors work with OpenAI?

- According to Statista, 27% of companies using ChatGPT are technology companies; 23% of them are in the educational sector; 10.9% are classified as business companies and just under 10% of them are manufacturing companies. (Source: Statista.)

- Some reports indicate that ChatGPT is currently used by 49% of companies in the United States. (Source: ResumeBuilder.)

- OpenAI CEO Sam Altman has stated that more than 92% of Fortune 500 companies use ChatGPT. (Source: Techcrunch.)

How many people use ChatGPT at work?

- Recent studies show a significant rise in workplace use of ChatGPT and similar AI tools. A 2025 global survey by KPMG and the University of Melbourne, covering over 48,000 individuals across 47 countries, found that 58% of workers intentionally use AI at work. Around one-third do so weekly. However, 57% admitted to concealing their AI use from employers. 48% had uploaded company data into public AI tools, raising serious data security concerns. DeskTime reported that by the end of 2024, 76% of global offices had integrated ChatGPT into workflows. Individual employee usage rose to 34.9%, up from 19.1% a year earlier. Adoption was particularly high in India at 92%. In the U.S., a Pew Research Center survey from early 2024 showed that 23% of adults had used ChatGPT, an increase from 18% in mid-2023. Usage was notably higher among those under 30, with 43% reporting experience with the tool. These findings highlight the rapid adoption of AI tools in professional settings alongside growing concerns around transparency and data privacy. (Sources: Business Insider, DeskTime, Pew Research Center.)

Who uses ChatGPT more, men or women?

- According to research carried out by Enterprise Apps Today, 65.68% of ChatGPT users are male and 34.32% of users are female. (Source: Enterprise Apps Today.)

Can ChatGPT search the web?

- ChatGPT’s web search feature was introduced on October 31, 2024, allowing users to receive up-to-date information directly within the chat interface. (Source: OpenAI.)

- Initially available to ChatGPT Plus and Team subscribers, the web search feature was expanded to all users, including those on the free tier, on December 16, 2024. (Source: The Verge.)

- The web search functionality enables ChatGPT to provide real-time information such as news updates, stock quotes, and weather forecasts, enhancing its ability to deliver timely and relevant responses. (Source: OpenAI.)

- As of April 2025, ChatGPT’s web search feature is available to all users in regions where ChatGPT is accessible, ensuring a broad global reach. (Source: OpenAI.)

How many user-built GPTs are there?

- ChatGPT users have created more than 3 million ‘GPTs’. These are custom versions of ChatGPT that can be used to perform specific tasks such as learning a language, synthesizing results from academic papers and many more. Many of these user-generated GPTs are available in the ‘GPT Store’. (Source: OpenAI.)

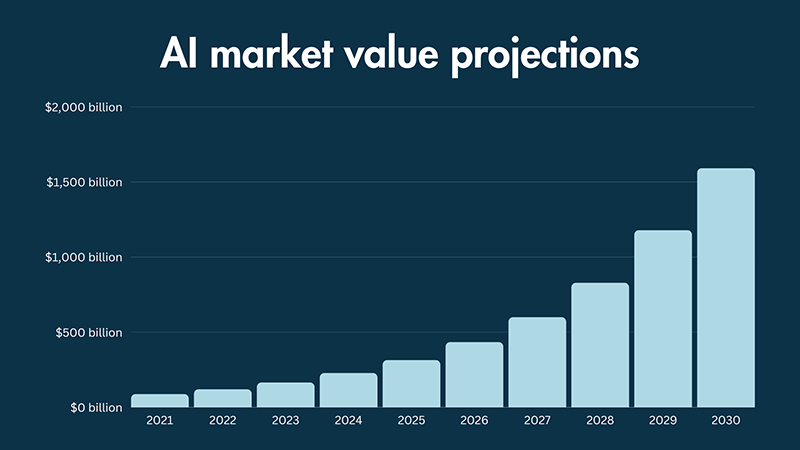

How big is the artificial intelligence market?

- The AI market is worth an estimated $207 billion, and is forecast to grow to as much as $1.5 trillion by 2030. (Source: Statista & Precedence Research.)

What are the main competitors to ChatGPT?

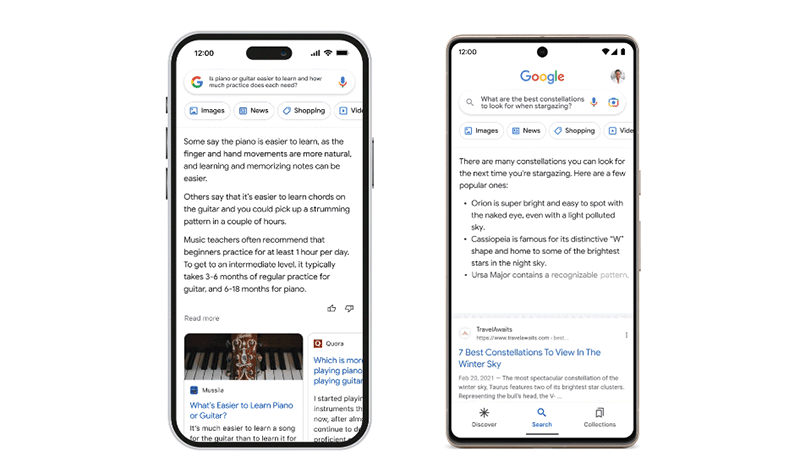

- Google launched early access to its own conversation-based AI application, ‘Bard’, on 21 March 2023. Bard — like ChatGPT — provides searchers with synthesized information in the form of direct answers to their questions and requests. (Source: Google.)

- Google’s live Bard event was followed by a fall of around 7% in the company’s share price after the platform generated a wrong answer. The company promptly removed the video of the live event from YouTube after the Bard AI provided an incorrect response. (Source: Dexerto.)

- Google Bard is currently available in over 40 languages, including English, Japanese, Arabic, Chinese, German, Hindi, and Spanish. (Source: TechCrunch.)

- Google recently introduced Gemini 2.0, a powerful update to its AI model, enhancing its ability to understand and generate text, images, audio, and video. This version includes “agentic” features, enabling it to plan and execute tasks autonomously across platforms like web browsers and mobile devices. The Gemini 2.0 lineup consists of various versions, such as Gemini 2.0 Flash, optimized for real-time audio and video interactions, and Gemini 1.5 Pro, designed for complex reasoning and handling large datasets. Additionally, Gemini 1.5 Flash offers versatile performance for diverse tasks. Gemini 2.0 excels in visual reasoning and multimodal tasks, with benchmarks showing performance comparable to or surpassing leading models like GPT-4. Integrated into Google services like Search and Workspace, Gemini 2.0 also powers experimental projects such as Astra and Mariner, demonstrating its capabilities in real-time assistance and web navigation. (Sources: Google, Wired, The Verge.)

- On August 31st, 2023, Chinese tech giant Baidu released its chatbot named ‘Ernie’ — short for ‘Enhanced Representation through Knowledge Integration’. ChatGPT is not officially available in China. ‘Ernie’ currently has a userbase of around 300 million people. (Source: CNBC & Reuters.)

- Microsoft’s ‘Copilot’ AI tool is currently being rolled out across its Microsoft 365, Surface and Windows products. Copilot can be added to Microsoft 365 at a cost of $30 per user per month (or $360 for an annual account).

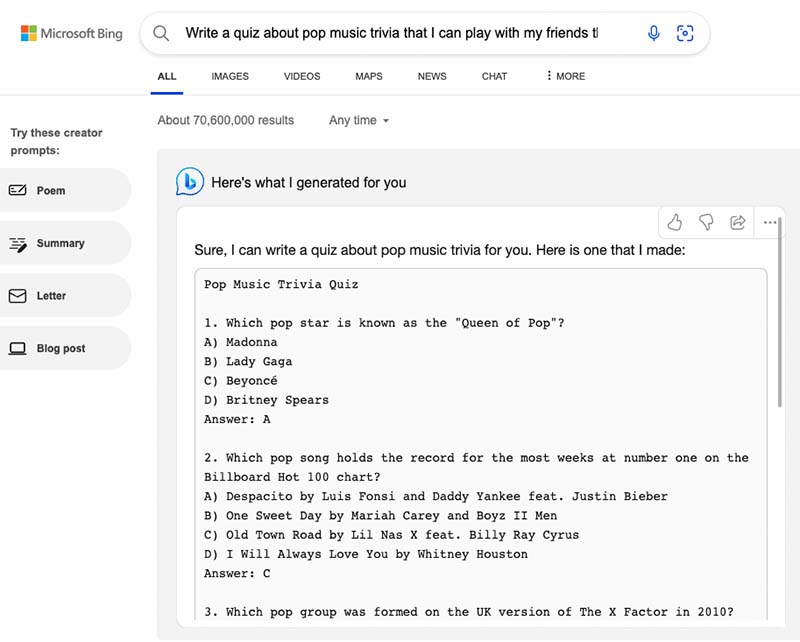

- The Bing search engine has included an integration with ChatGPT’s AI technology since March 2023. At time of writing (April 2025), Microsoft’s Bing search engine has increased its share of the global search market to 4%. (Source: Statcounter.)

- China’s Alibaba recently unveiled a new generative AI model called ‘Tongyi Qianwen’, which translates into English as “truth from a thousand questions”. This technology is being rolled out across Alibaba’s voice assistant and workplace messaging apps before being integrated into Alibaba’s wider suite of apps. (Source: Reuters.)

- Apple is developing a generative AI platform, codenamed ‘Ajax’, built on top of Google Cloud’s JAX machine learning accelerator. (Source: Techmonitor.)

- Meta’s open-source Llama AI model was first released in February 2023 with 7, 13, and 65 billion parameter versions, integrated into the Meta AI assistant on Facebook, Instagram, and WhatsApp. Subsequent updates included Llama 2 in July 2023 (7, 13, and 70 billion parameters) and Llama 3 in April 2024 (8, 70, and 405 billion parameters). By September 2024, Llama 3.2 introduced image processing, and Llama 3.3, released in December 2024, delivered enhanced performance at lower computational costs, demonstrating Meta’s rapid advancements in AI technology. (Source: Wikipedia.)

- DeepSeek is a new family of open-source AI models created by Chinese research lab DeepSeek-AI. It has quickly gained attention for its strong performance, especially compared to other well-known models like Meta’s Llama 3 and OpenAI’s earlier GPT models. Released in stages through 2024 and 2025, DeepSeek models have been praised for their ability to handle both regular conversation tasks and more technical jobs like coding. DeepSeek-V2.5, one of the latest versions, combines the strengths of its earlier models to offer better instruction-following, longer memory (called “context length”), and faster, more accurate answers. Unlike some AI tools that are locked behind paywalls, DeepSeek models are openly available to download and use, which has helped them spread quickly among researchers, developers, and startups. Many media outlets and AI experts have called DeepSeek one of the most serious open-source competitors to GPT-4, especially after DeepSeek-V3’s release, which demonstrated performance levels close to or even better than some paid, closed models. (Sources: DeepSeek, Business Insider.)

Will I lose my job because of ChatGPT?

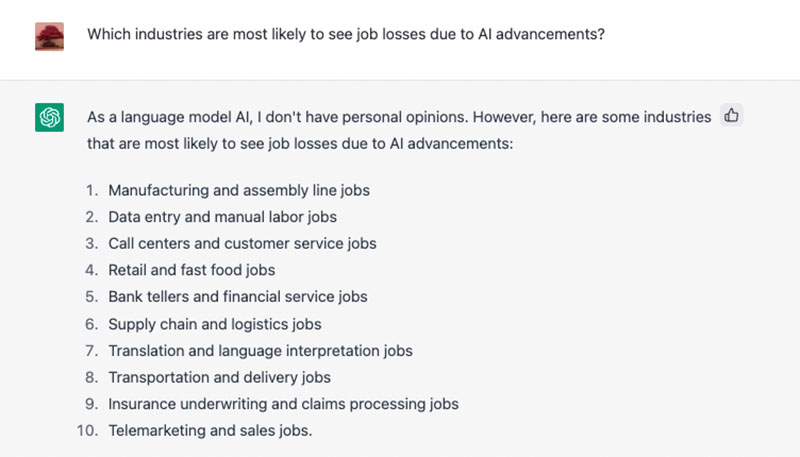

- In 2013, a University of Oxford study estimated that 47% of the U.S. workforce could potentially be replaced by AI over the next few decades. Since ChatGPT’s stratospheric rise, employees in industries including customer service, education, content creation, search engine optimization (SEO) and many more have been wondering if their sector will be hit by job losses due to recent AI advancements.

- In a March 2023 research paper, OpenAI reports that “80% of the U.S. workforce could have at least 10% of their work tasks affected by the introduction of GPTs, while around 19% of workers may see at least 50% of their tasks impacted”. (Source: OpenAI.)

- Sectors likely to be particularly affected by AI advancements include tech, media, law, market research and finance. (Source: Business Insider.)

- Examples of jobs likely to be affected significantly by AI include programmers; journalists; paralegals, legal assistants; market researchers; educators; financial analysts, personal financial advisors; traders; graphic designers; accountants; and customer service agents. (Source: Business Insider.)

- Interestingly, when you ask ChatGPT which industries are most likely to see job losses due to AI, it suggests that the most at-risk jobs are those involving a large amount of repetitive tasks (for example those in manufacturing, customer service, translation, data entry and logistics).

How aware are people of ChatGPT?

- Awareness and usage of ChatGPT among U.S. adults have grown significantly over the past year. Earlier Statista research estimated that around 45% of U.S. adults had seen, read, or heard about ChatGPT, with awareness highest among Gen Z and Millennials. However, newer data shows a clear increase. A national survey by Elon University’s Imagining the Digital Future Center in January 2025 found that 52% of U.S. adults now use large language models like ChatGPT, highlighting the rapid pace of adoption across generations. Supporting this trend, a Pew Research Center survey from early 2024 showed that 23% of U.S. adults had personally used ChatGPT, up from 18% just six months earlier. Together, these figures demonstrate not only rising awareness but also a growing reliance on AI tools like ChatGPT in everyday life. (Sources: Elon University, Pew Research Center.)

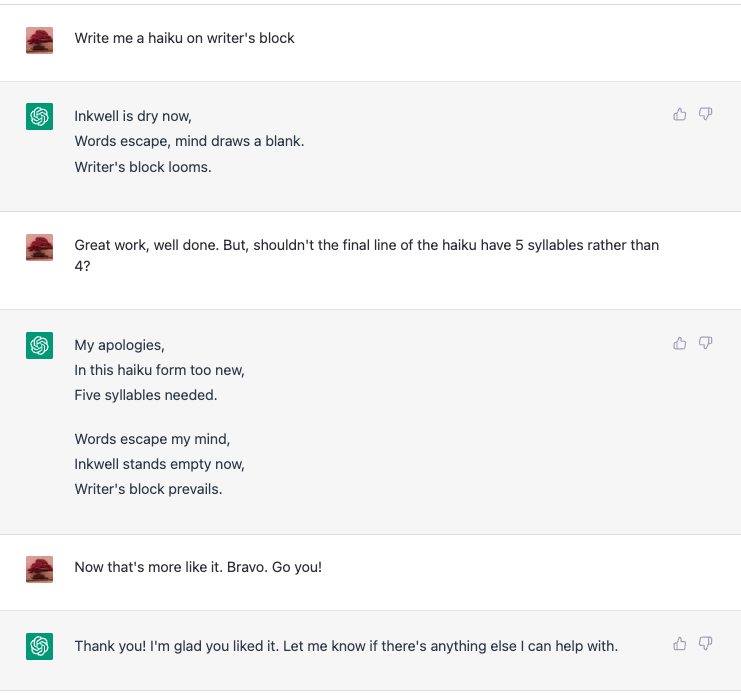

Can ChatGPT write a decent haiku?

- Well, I asked ChatGPT to write a haiku on the topic of writer’s block. It made a pretty good attempt at it. I then pointed out a flaw in the syllable count of the third line of ChatGPT’s haiku attempt. The program then apologised to me (in haiku form!) and produced another haiku on writer’s block that (almost!) respects the 5-7-5 syllable count of classical haikus.

Related resources from Style Factory

We hope you’ve found this deep dive into ChatGPT statistics useful! If you’re interested in learning more about the platform or accessing more stats from Style Factory, please check out the resources below.
